2022 was the year when we all learned about generative AI. Amid this technological boom, Runway, one of the companies behind the popular AI text-to-image model called Stable Diffusion, has raised funding at a $500 million valuation. In this interview with Alejandro Matamala, we learn how, while advancements in tech reduce the gap between algorithms’ capabilities and ours, designers will have the opportunity to use their creative muscles even more.

Alejandro Matamala is a Designer, Software Developer, and Art Book Publisher from Chile, based in New York. He is the Co-founder and Head of Design at Runway, an AI-powered creative suite for editing and generating media. Previously, he was a researcher at New York University, working at the intersection of artificial intelligence and creative tools. Alejandro has worked with several commercial and cultural organizations in the past. He co-founded Material Design Studio and Ediciones Daga, an independent art book publishing house.

For those who are not familiar with Runway, what is it, and what does it do?

Runway is a full-stack applied AI research company that is opening up a new wave of storytelling. We develop AI models for content creation & content editing, and we build software that allows creative professionals to leverage these models within their workflows.

Our core product features include a full-service video editor and a suite of over 30 AI Magic Tools, all of which live in the browser. These tools enable our users to generate and edit content from simple natural language text prompts, turning previously tedious and time-consuming workflows into a matter of minutes. Our ultimate goal is to help our users create more and better content, faster. Some of our AI Magic Tools include Text to 3D Textures, Infinite Images, Green Screen, Inpainting, Motion Tracking, and AI Training.

How did you come up with the idea of Runway?

My co-founders and I met at New York University while we were all in art school. We were fascinated by both creative tools and artificial intelligence. The potential of this technology seemed limitless to us, so we started building tools for various types of creators, such as designers, creative coders, and artists, and giving them a place to experiment with models. As more people used our tools and experimented with the technology, we saw a clear pattern emerge: users were applying models to video editing. That showed us an opportunity to lean further into creating video tools.

What were the biggest challenges in creating Runway? And what are the biggest challenges you’re facing now?

At Runway, we aren’t simply building software and making UI improvements; we are inventing the models and the underlying technology in parallel. That’s why we refer to ourselves as a “full-stack AI company”. We have an in-house applied research team that develops the models, and an engineering and design team that builds tools for our users that are powered by those models. Balancing all of those moving parts is challenging, but it is something we’ve had a lot of practice doing over the last four years. It’s what enables us to build and ship new tools and features quickly.

Where do you think we are going to see AI being used in the future? Any use we don’t have or see now but which you think will emerge?

Within the creative industry specifically, I think we’re going to see Generative AI embraced more and more by the design and filmmaking communities. The tools that already exist and are continuing to emerge not only streamline tedious workflows, but also unlock many more creative possibilities for people who previously didn’t have the tools or the knowledge. Historically, you needed expensive programs that required a lot of computing power and technical knowledge to operate. Now, all you need is an idea, an internet connection, and the ability to express your idea in words. These tools are helping to democratize content creation, and are helping to bring production cost and time much closer to zero. It’s not crazy to think that someday soon we’ll see films that are completely generated: characters, voices, score, b-roll. Everything.

Beyond the creative industry, I think the potential for AI is infinite. With the emergence of natural language prompts as inputs, every industry can see benefits. Large language models are a good example of this: people who don’t know how to code can now produce code; people who struggle with math can now solve any math equation; people who struggle with copy can now write entire blog posts. Similarly, with Runway, anyone with an idea to create images and movies can do so now.

Your talk for Design Matters Mexico 23 is titled “The rise of – synthetic – designers”. Who are they, and what do they do?

Synthetic designers are a new type of designer who works in many different fields and uses AI-driven algorithms to generate new images, music, voices, videos, scripts, and environments. Their projects are created artificially using a combination of traditional and generative media, so there’s not as much of a need for physical elements like cameras or actors. Entire projects can now be generated with synthetic media. This new wave of synthetic designers is becoming more common because of new tools that are emerging and unlocking new ways of making things. As part of their skills, synthetic designers can customize tools by collecting and organizing datasets, which gives them incredible control over their outputs. Synthetic designers are enabling new forms of storytelling and opening up new markets.

Last but not least, aside from any dystopian illusion of a future where humans are replaced by automated machines, what’s your take on the impact AI tools can have on the work of the design community?

We believe that AI is simply another tool in the toolbox of artists. The goal is not to replace artists; rather, it is to provide them with tools and techniques that allow them to spend less time on the tedious aspects of their work and more time creating, as well as to encourage them to explore new creative avenues. Those are the people and artists for whom we build, and we hope to continue doing so.

Learn more

Alejandro gave a talk at the design conference Design Matters Mexico 23, which took place in Mexico City & Online, on Jan 25–26, 2023. The talk, titled “The Rise of Synthetic Designers”, explored how, as AI technology improves, a new breed of designers called “synthetic designers” will play an increasingly important role in the world of design. Join our streaming platform Design Matters + and learn from Alejandro and over 300 designers from around the world.

Follow Alejandro on Twitter, LinkedIn, and Instagram, or visit his website. Learn more about Runway and follow them on Twitter, Instagram, TikTok, or Runway Research.

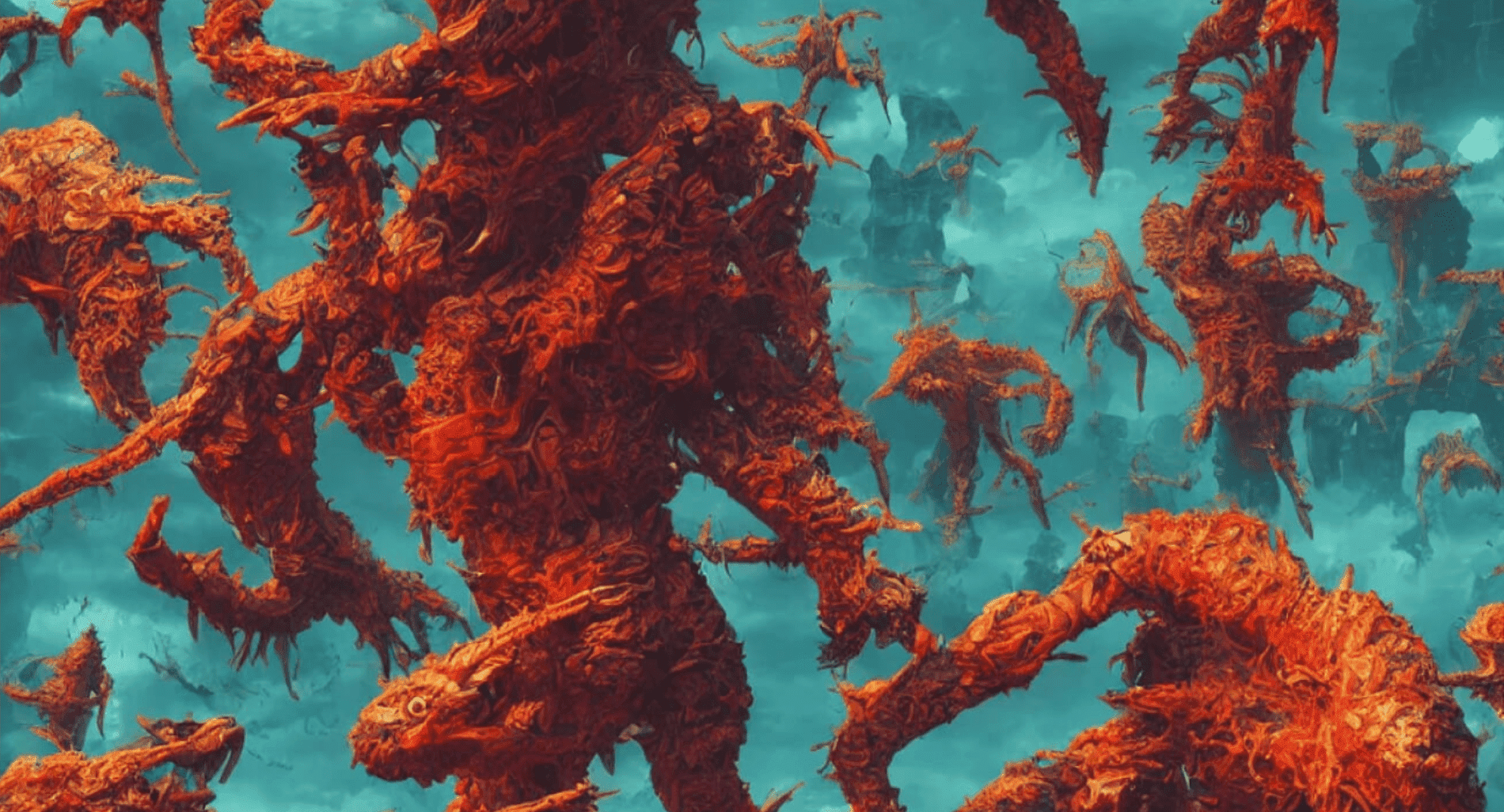

Cover photo: From High-Resolution Image Synthesis with Latent Diffusion Models, courtesy of Runway.