Ramsay Brown is the CEO of Mission Control, a company on a mission to ‘empower the world’s most successful teams to move AI faster while breaking fewer things’. Ramsay works at the intersection of artificial intelligence, neurochemistry, and human behavior. Co-Founder and former COO of Boundless Mind (formerly Dopamine Labs), where he and his team worked on persuasive AI and behavioral design, he is a NeuroTechnologist trained at USC’s Brain Architecture Center. He also worked on Brain Mapping and pioneered an app that was something like Google Maps for the Brain.

Our collective unease with the possibility of a technological dystopia may not be unfounded. This is a topic that interests many, and creeps out some: the humanization of AI. It can look like some of the systems we are creating are becoming more like us. Very recently, a Google engineer was all over the news because he believed he could prove that LaMDA is sentient, after publishing a pretty philosophical conversation he had with it. What’s your take on this?

So my background is Computational Neuroscience. I studied how the wiring of the brain between its regions forms a computer that is us, how the different regions connect, what those connections create in terms of circuits of behavior, emotions, thoughts, and feelings, how that relates to disease states, how that works in healthy people, and how you could engineer the computers. It’s worth saying that the originally stated goal of the whole field of Artificial Intelligence was to explore how digital electronics and computing machinery could be used to do the things brains do. But that was 70 years ago, so let’s not forget that we’ve had more than half a century to get here from the original stated goal of making mechanical people. This has been the goal from the outset. Every few years, there’s a boom-and-bust cycle in AI; right now we’re in a boom that I think is going to last for quite some time.

Do you think we’ll consider AIs as equals one day?

AI systems have been built in a way that resembles how different parts of the brain are arranged, and to do the tasks the brain does. These neural networks are then exposed to vast quantities of text. Subsequently, they seek to pick out the statistical patterns between words and how they appear with each other. So what we get from this is a machine that appears to do language-like tasks.

One of the most complicated philosophical problems comes from a Neuroscience perspective: we don’t totally understand how humans do these tasks. We know the region of the brain where, if you stick an electrode in and stimulate it, you babble nonsensically. We know the part of the brain where, if you lesion it or have a stroke in that region, you can never speak again. But how we do language is still unknown to us. How children learn language is still unknown. And in all honesty, some of the approaches that these AI systems are taking to develop language may mimic how humans develop language.

There are reasons to believe there is something children might be doing that is like this statistical inference of how words fit together, how those can create symbols in our minds, and how those symbols get trapped in our neurons. So, when this engineer says that his language model appears to be sentient (in addition to not understanding what that word really means in this context), I think what he’s trying to say is “this thing is like us and deserves grace and dignity”. We have to remember that we’re unclear about how we function as humans. So we should be cautious about dismissing claims like that one, which say that a complex system designed after our best understanding of our own principles has appeared to begin doing things that we do. When we say that this system can’t have certain properties, that it can’t be sentient, or that it can’t be conscious, there might be a bit of anthropocentric chauvinism on our part. As best we can tell, sentience may be a property of complex adaptive systems, one that emerges from those systems, and it would surprise me if we were the only existing sentient beings.

To answer briefly: Do I think that LaMDA is sentient? No. Do I think that there’s a timeline for sentient machines? Yes. Do I think it’s going to happen sooner rather than later? Yes. Because these things are not being built out of lines of code and if-else statements; they’re built to mimic our fundamental neuroarchitectures. We don’t know if their sentience will be shaped like ours, and we don’t know if their experience of reality will be shaped like ours, or if they will have one. So we’ll need to have hard conversations about our moral circle and where they fit in it, and what we should do to expand our moral circle so we don’t alienate a new form of intelligence that is improving faster than we are. That is going to be one of the most fascinating conversations, and every person on this call will still be young and attractive by the time we have it.

Think about it: this used to be science fiction. And now these are questions you’re asking in an interview for the design community. The reality now is that we live in the capital of the future; we are in the timeline where our behavior is being reprogrammed at scale by algorithms. And we have to talk about whether or not machines have feelings and “human” rights. We live here. This is real now.

This makes you think about being human and question yourself at the same time…

This is where the Computational Neuroscience background informs a lot of my thinking. I think that dignity and grace are true and beautiful and that sentient and conscious beings are deserving of them.

For context, we, writ large, have a miserable track record with grace, dignity, and rights. And so when people talk about not giving rights to machines, we have to place that in the context of the fact that we’ve barely given rights to certain groups of humans, which is an abominable state of affairs. In the past 100 years, we have failed to grant rights to many groups of people, and we continue to fail at this.

We’re terribly bad at figuring out who deserves to be part of a moral circle. Case in point: we had a federal holiday yesterday in the USA to observe our incremental, and woefully shabby, racial equality progress. We still have so much progress to make.

The conversations we need to have about machines in our moral circle are becoming more pressing all the time.

How do the developments in the AI segment bridge to the daily creative work of designers out there?

It’s easy to look at another field’s tools and dismiss them as if they don’t apply here, pretending we don’t need to worry about them. But artificial intelligence has left the scientific laboratory and has entered the design workspace and the design studio in ways that we didn’t expect to happen this fast.

Let’s think about the three phases of tools in technology enablement and design over the past 50 years: in the first phase, we saw technology and computers being used as part of a design methodology. I could use a computer to do my job as a designer. With the beginning of digital authorship and digital visual editing, we began to see a second phase emerge (and I think we’re still in it): the design workflow. Not just the actual moments where I am in Figma, but the larger role I play in a design team, solving business outcome problems for clients. So we went from having tools just for the practice itself to having tools for the people. From start to finish, we got platforms that coordinate design workflows and design methodologies.

What I think we’re about to enter, and what we see coming as a third phase, is tools for tools. The design workflow of 2023 is going to increasingly involve relying on intelligence machinery as part of how designers do their job, whether at the moment of creation, editing, or management. I’m going to walk you through some examples in my Design Matters 22 talk in September, but what I can say now for design professionals is that they’re about to enter one of the most exciting phases of their careers, because we are going to have an unprecedented ability to do more with less, and to do better with less, as designers. Because every stage of our workflows, from collecting requirements and mapping services to the actual creation of creative work, is currently being touched by artificial intelligence, the design process of tomorrow (and the very near tomorrow) may look very different than it does right now. I want to prepare designers for how to think about that process and how they can incorporate these tools and methodologies into how they work, to make the most of that and help their teams win.

Learn more at Design Matters 22

Ramsay gave a talk at the design conference Design Matters 22, which took place in Copenhagen & Online, on Sep 28-29, 2022. The talk, titled “The frog does not exist: what the enterprise needs to know about design in the age of AI”, explored how AI is disrupting design, what responsibilities designers hold, and what winning companies will do about this. Access the talk for free here.

Did you like this interview? Check out our second conversation with Ramsay Brown, where we talked about the links between design and AI, while examining the reasons why we’re glued to our phones.

Follow Ramsay Brown on Twitter and LinkedIn.

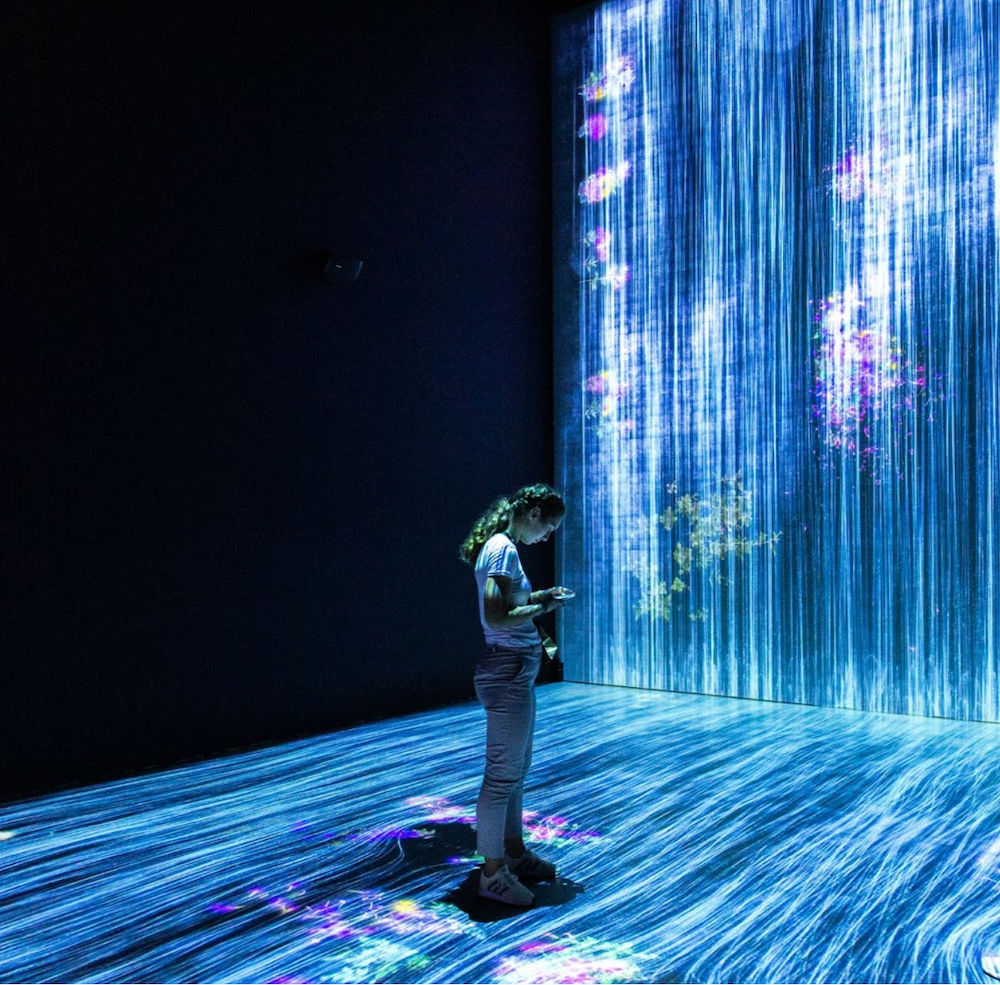

Cover image: courtesy of Andy Kelly