DM: Let’s talk about Design and Neuroscience. Can you give us an overview of how the work of designers impacts the brain of people using digital platforms?

RB: This is something I spent the better part of my early career on, as the founder of a company called Dopamine Labs. This topic will also be an important part of my talk at Design Matters 22 in Copenhagen this fall. Our core hypothesis was this: over the past 100 years, the design community has found that its methodologies and frameworks for creative, goal-oriented problem solving can be reapplied to new domains that we previously didn’t understand could be approached through the lens of design. This has gone from very basic visual design to architectural design, new materials design, experiential design, etc. Eventually what we came to understand is that, if you’re a designer and have an understanding of how the brain operates on a first-principles basis, you now have a platform for thinking of human behavior itself as a design process. You have a constrained set of tools, known methodologies, paradigms, and frameworks. You have the outcomes you seek. At this point, you can take other design methodologies (visual design, interaction design, experience design) and, on top of that, apply the same frameworks to the building blocks of the brain and to how behaviors and habits are triggered, especially in digital contexts. And you can approach those with the same processes and paradigms you have used for other design tasks.

Image courtesy of Ramsay Brown.

DM: What did it mean for designers to hack into the ‘golden pot of human behavior’?

RB: This was a really significant breakthrough that came out of the smartphone revolution because, for the first time, we all had these small, connected supercomputers on us at all times. These devices were capable of detecting and measuring almost our every behavior, and sending that data to the cloud to be used in a computation about whether that was the optimal time to notify or trigger us for a given type of behavior. All of this, together with the ability to push notifications down to our phones, smart watches, etc., provided the means for designers to intervene in the very makeup of daily life, and in real time.

Some realizations around the structure of human behavior (especially when it came to habits and how behaviors increase or decrease in frequency) meant that we now had a platform for thinking through how I might, as a designer of human behavior, increase the frequency of a particular behavior. This was the very first time we got to connect all of those dots, understanding that behavior itself was something that could be designed against, that we had a language for this, and that we now had tools for this. Increasingly, we saw that the companies clamoring for our attention were investing in how to think about human behavior as a design platform.

DM: We’ve heard you talking about mapping the brain. Although it may sound like wizardry, for some of us there’s a growing concern over how many companies out there are investing more and more to capture our absolute attention. However, we’re not really sure at what cost. How do you see this moving forward, and who are the main players involved?

RB: I think this is a topic that has received a lot of appropriate attention over the past few years, and it still does. And we see the largest players still being those digital social platforms. A few years ago, the largest players in this world were teams like Facebook and Twitter. Now I think it’s pretty easy to talk about TikTok and its algorithm as one of the dominant forces in the use of our data. These companies shape our behavior to increase our engagement on the platform and the amount of time we spend using their technologies.

But there’s more. For example, in the company I built and sold (Dopamine Labs) we sought to distribute and democratize the underlying technologies, and licensed them to the companies that are trying to help people become the better versions of themselves. Think of the apps on your phone that help you meditate, drive safer with fewer distractions, adhere to the diet you’re trying to work on, or remember to take medication on time. We thought those were the types of platforms that should receive this technology.

We then released free tools to help people fight tech addiction, by running those same design processes, but in reverse. Instead of asking, “How might I keep a user on the platform for an extra 30 minutes a day?”, we asked ourselves, “How can I allow users access to the platform without creating an addictive tendency?” This is the role smaller organizations like mine played.

That said, I feel we also have to admit the users’ responsibility around this as a non-trivial relationship.

DM: We have given consent to our apps, but are we aware of what is happening to us when using these digital platforms? Who is in charge here? And what is the roadmap for a future where users get a say in what happens with their own health?

RB: I’m the CEO of a venture-backed company, and I strongly understand the incentive structures I’m under, like many other executives and business leaders. I genuinely think there are very few miserably evil people in the world, while most people are just trying to do their best. The way we get to sub-optimal outcomes, for example this one where everybody is walking around with slot machines (telephones) in their pockets, is through a coordination failure: a scenario where independent actors like politicians, investors, project managers, engineers, designers, end-users, customers, brands, etc., all have incentives, and each independently tries to get the thing they’re incentivized to get. Along the way, because it is hard to navigate several incentives at the same time, and in the absence of strong constraints, we arrive at miserably sub-optimal outcomes that asymmetrically favor one set of incentives over the others.

I’m American, and I can say that we increasingly look to the EU for how to do this right and early. Regulation can form a set of backstops against which new incentive structures have to be organized and, at the same time, provide the social support against a problem that would otherwise be too large.

Investing in regulation, in cultural, social, political, and economic backstops is the strategy for how you build companies meant to last a hundred years, especially today. And we’re not going to make it there if we don’t start making regulation a part of the formula. It’s a backstop. I keep going back to that word because it’s the thing we incentivize. But the reality is that there are often other types of incentive structures that can be used with companies.

“The argument that regulation limits growth appears to be falling apart, as growth that comes at any cost isn’t successful anymore. It’s a short-term pyrrhic victory for a very small handful of people.”

Ramsay Brown

Some of those other types of incentive structures come in the form of customer, user, and community backlash. But also in the shape of the behavior of investors and financial analysts, boards of directors, and other actors in the industry. I think many of the things that are going to accelerate decarbonization are the same levers we have for changing the way organizations behave when it comes to the design of human behavior.

So I’m optimistic that these things can be navigated. It’s going to take the interplay of governments, corporations, multilaterals, trade groups, unions, and community organizers. Not because they’re going to browbeat it, or do anything particularly moralizing to it, but simply because all the stakeholders can create different vectors of pressure, and then incentivize change in how these systems operate.

A lot of what we do at the AI Responsibility Lab is about how those same ways of thinking are currently being used to accelerate AI safety and AI regulation, which is quickly becoming one of the largest concerns, especially in Europe. So we know these tools work, and we know that change can be achieved.

DM: Would you share with us some examples of how to keep pushing technological boundaries without neglecting a much-needed human-centered approach?

RB: What I can describe are the types of policies and the types of practices that go into those tools and that end up creating more responsible products.

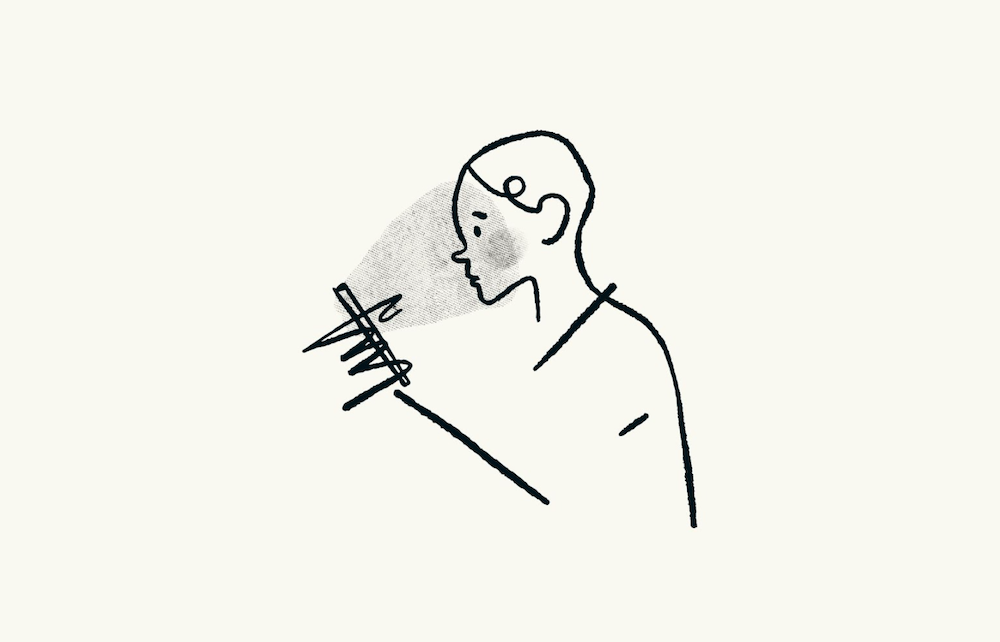

There’s been discussion over the past years, within different communities, about Dark Patterns and Light Patterns in design. I think as a first principle, one of the things we distill out of that is this notion of transparency with our users about how they and their behavior (their wants, their needs, their dreams, and their goals) are subject to design processes. In Light Patterns, interfaces, experiences, and processes are very transparent with people about the relationship they’re about to have with the technology, and how that technology may cause them to become a different version of themselves. We find that way of interacting with users to be exceptionally responsible, because you’re really giving people back a sense of autonomy.

DM: What about Dark Patterns? And how to avoid falling into them?

RB: Dark Patterns are not transparent with the user about their presence, their methodology, or what their outcomes might be.

So if I have a product that has been demonstrated to make people unhappy the more they use it, but it makes me earn more money the more they use it (and I’m in a backhanded way referencing most of social media here), then I have a fundamental alignment challenge. This is an alignment failure, and we consider a technology that has it to be irresponsible.

It’s easy to think of this problem framed under the “why can’t users just stop?” idea, but there’s a network of incentives that users are under, such as wanting to remain connected to their friends, their family, their significant others, and perhaps their job. We find that not only is it harder to opt out than to opt in, but that the companies themselves are approaching this relationship with asymmetric leverage over the user; the user has no similar leverage back over the company. So if I use Facebook, Facebook knows more about me and has more computational power available than I have the means to push back against. This is an unfair fight for attention, autonomy, and persuasion. And because Facebook is not incentivized to be very transparent about all of those methodologies and processes, they’re able to continue to invest in that leverage for business reasons, even though it is very bad for me as a user.

The first principle of designing ethical digital products is transparency. The second principle is alignment. And the third is that behavioral design has a duty to the larger conversations about which sort of future we’d seek to invent, the role that we would play in building better worlds, and whether or not we have an obligation to care for our current and future selves and those yet unborn.

DM: This can easily sound like the future of humanity is resting in the hands of designers…

RB: In a series of talks I gave back in 2015, I referenced design as a superpower, since a designer can potentially manipulate data and technology in meaningful ways, at a scale larger than ever before. If I create a very successful consumer-facing technology, which spreads like wildfire, now I have a small portion of every living human’s attention span that I can use for almost any end… That’s an unprecedented quantity of power.

It’s already recognized in the design community that we designers have ethical and responsible imperatives regarding how we use this new superpower of shaping human behavior. Especially now with AI coming into design, there are things we’re going to do, and there are things we’re not going to do. So at the end of the day we are inventing the future and have a way to shape what’s coming.

Every time we say “I don’t care”, “but the pay is good”, or “I know it’s not something I should be doing, but I can’t say no to the process”, we are compromising our moral compass; we are inventing a shittier future and a suboptimal outcome. We need to keep in mind that no one is going to build a better world but us. We as a community have never had a greater amount of leverage over the future than we do today; and to me, that’s extremely inspiring. That means we have to take our future in our hands, but it requires taking seriously the responsibility that comes with that power.

Learn more at Design Matters 22

Ramsay gave a talk at the design conference Design Matters 22, which took place in Copenhagen & Online, on Sep 28-29, 2022. The talk, titled “The frog does not exist: what the enterprise needs to know about design in the age of AI”, explored how AI is disrupting design, what responsibilities designers hold, and what winning companies will do about this. Access the talk for free here.

Liked this interview? Check out our first conversation with Ramsay Brown, where we take a more in-depth look at the purpose of Artificial Intelligence.


Cover image: Courtesy of Ramsay Brown